Manifold API and Sensor Network: Two New Repos

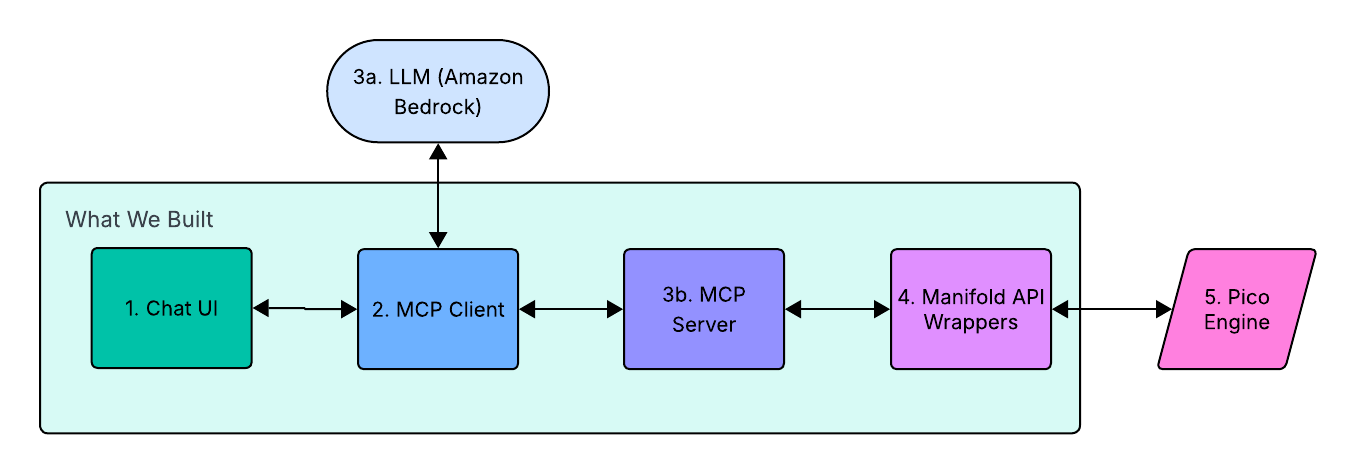

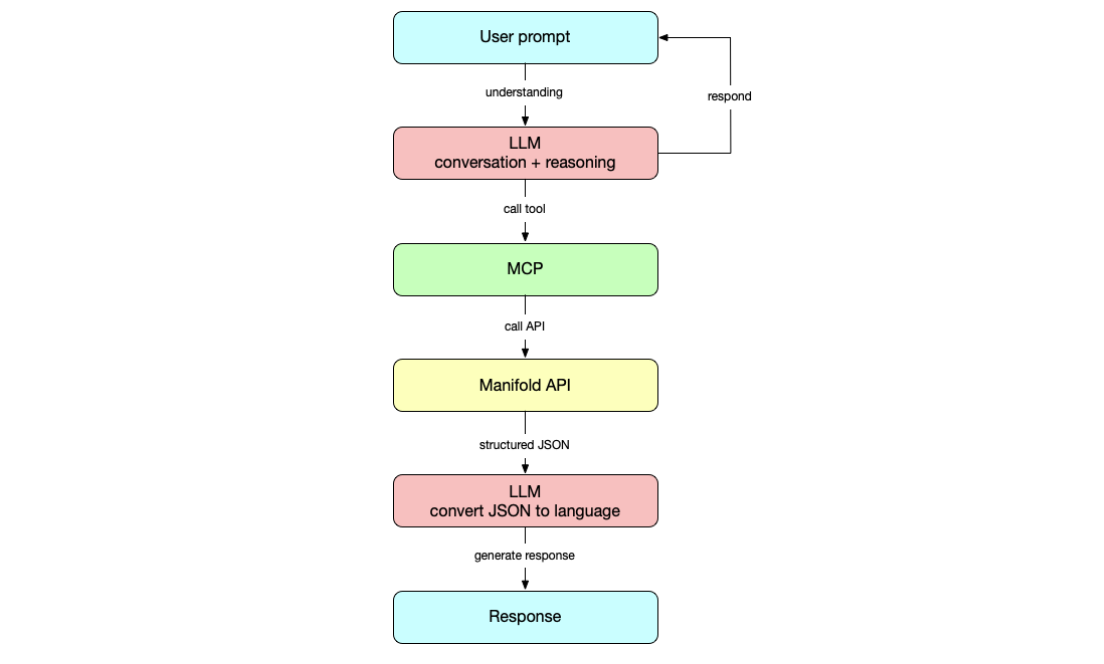

When I wrote about the BYU capstone project that built a conversational interface for Manifold, I glossed over something that had to happen first: the platform itself needed to be in shape before students could build a natural language layer on top of it. There were still some loose ends that needed to be cleaned up. That work is now complete, and I am releasing it as manifold-api on GitHub.

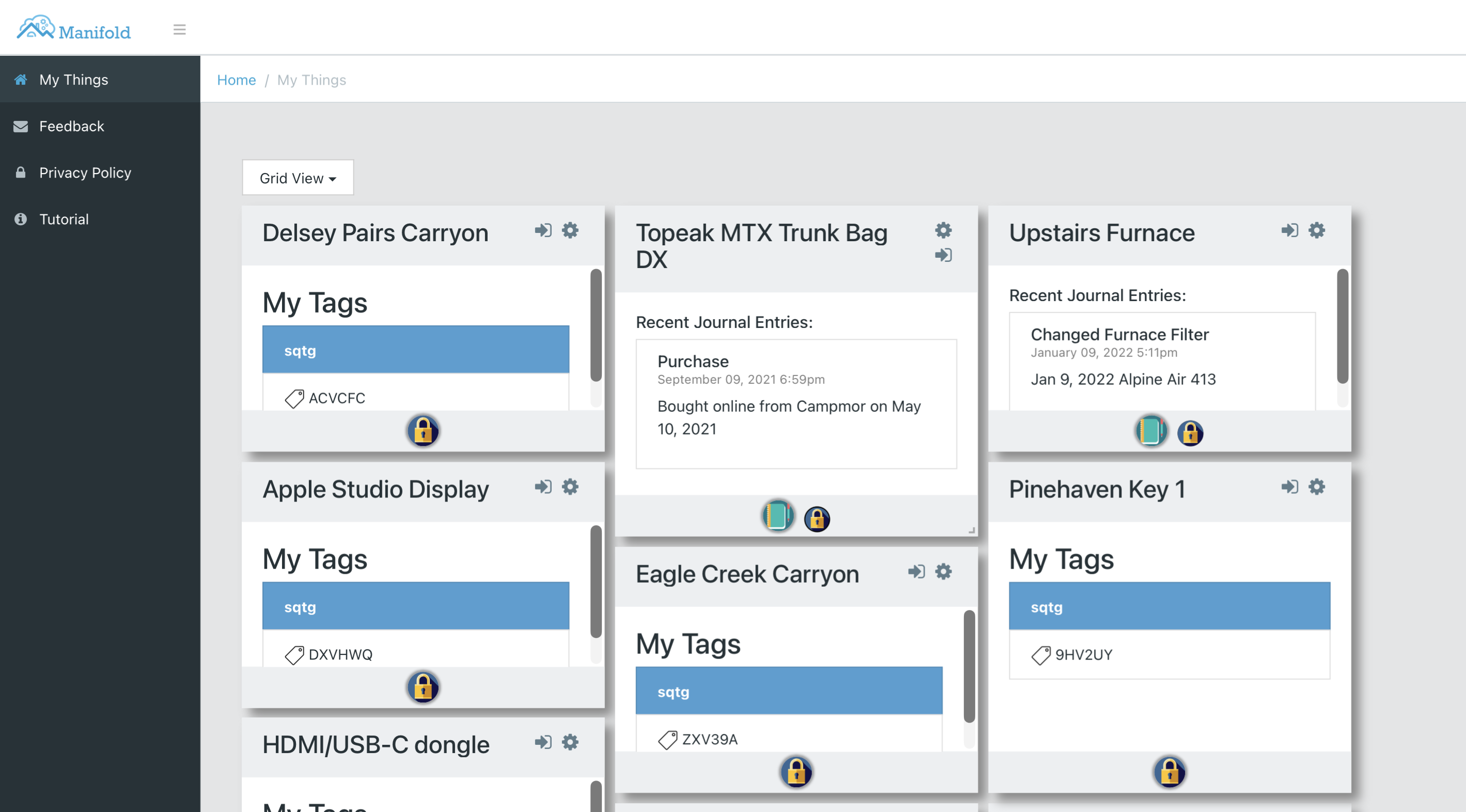

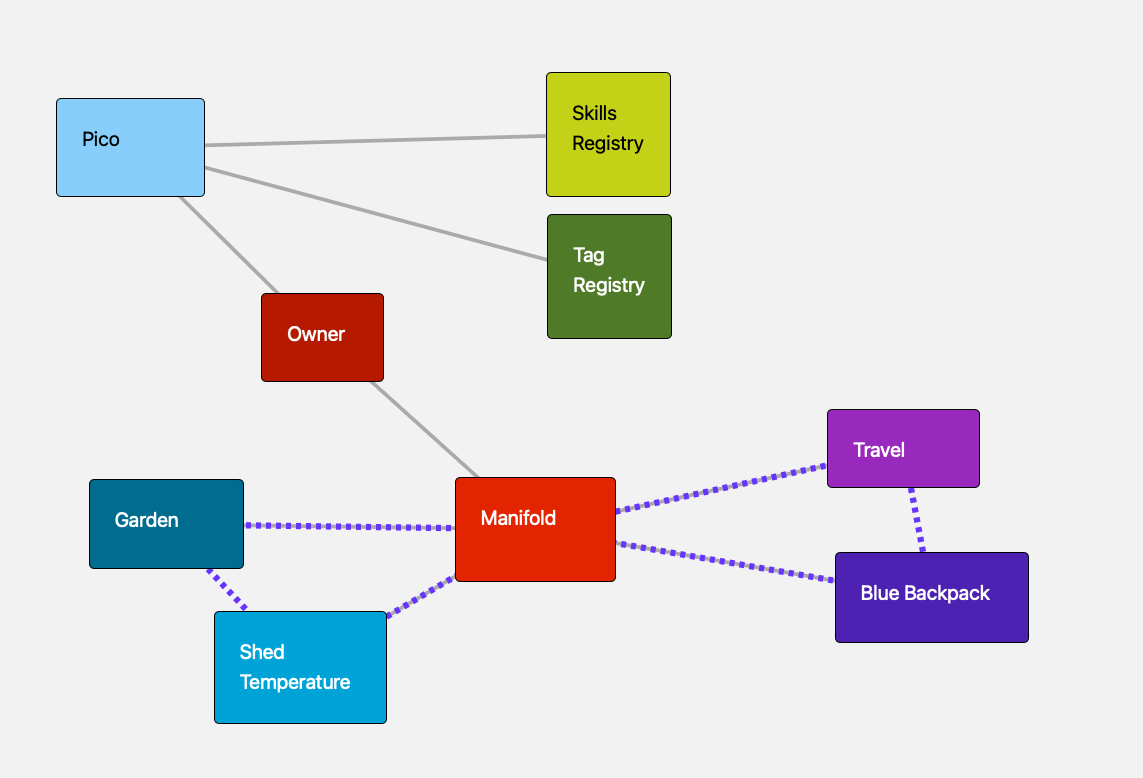

This update is the culmination of a pattern I have been refining across several projects. Fuse, the connected-car application I built years ago, organized its picos into communities that we called fleets. The temperature-network that monitors my pump house did the same thing with sensor devices and location groups. Manifold itself is built around that pattern. But each of these systems managed its own notifications, maintained its own pico hierarchy, and reinvented the same community lifecycle logic. The insight behind this update is that the community-of-picos pattern is general enough to be a framework; the domain-specific parts can be layered on top of the basic community logic. By giving Manifold's community pico a delegation interface and centralizing notifications on the Manifold pico, any domain repo can build its network of picos on a stable platform without duplicating the plumbing.

The biggest architectural change in this update is the notification platform. Previously, domain-specific rulesets called Twilio or Prowl directly. Each network managed its own credentials and delivery logic, which meant the same plumbing was duplicated across repos. The new approach centralizes everything on the Manifold pico: any thing or community can raise a manifold:add_notification event with a subject, message, and identifying attributes, and Manifold handles the fan-out to whichever channels are enabled for that pico (inbox, SMS via Twilio, push via Prowl). Notification channels are opt-in per subject, so a sensor community can enable SMS alerts without every other pico in the network generating noise. This is a cleaner separation of concerns, and it means domain repos no longer need to know anything about how the owner gets notified.
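The opt-in fan-out can be modeled in a few lines of TypeScript. This is only a sketch of the idea, not Manifold's KRL: the type names, the `fanOut` function, the `picoId` attribute, and the shape of the preference store are assumptions made for illustration. Only the three channels and the subject/message attributes come from the description above.

```typescript
// Illustrative model only; Manifold implements this in KRL on the Manifold pico.
type Channel = "inbox" | "sms" | "push";

interface Notification {
  picoId: string; // hypothetical identifying attribute
  subject: string;
  message: string;
}

interface Delivery {
  channel: Channel;
  notification: Notification;
}

// Hypothetical preference store: picoId -> subject -> enabled channels.
type ChannelPrefs = Map<string, Map<string, Set<Channel>>>;

// Return one delivery per channel enabled for this pico and subject.
// Channels are opt-in, so an unknown pico or subject delivers nothing.
function fanOut(n: Notification, prefs: ChannelPrefs): Delivery[] {
  const enabled = prefs.get(n.picoId)?.get(n.subject) ?? new Set<Channel>();
  return Array.from(enabled).map((channel) => ({ channel, notification: n }));
}

// Example: the pump house opts in to inbox and SMS for threshold alerts only.
const pumpHousePrefs = new Map<string, Set<Channel>>();
pumpHousePrefs.set("threshold", new Set<Channel>(["inbox", "sms"]));
const prefs: ChannelPrefs = new Map<string, Map<string, Set<Channel>>>();
prefs.set("pump-house", pumpHousePrefs);
```

The caller only supplies the notification. Which channels fire is decided entirely by the preference store, which is the separation the centralized design is after.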

The other major addition is automated bootstrap. The old manual three-step initialization—create tag registry, create owner pico, register tag server—is now handled by a single bootstrap ruleset installed on the root pico. In practice this means spinning up a fresh Manifold instance goes from a sequence of API calls that had to be executed in the right order to a single ruleset install. The test harness depends on this; it would not be practical to run a clean Docker container for every test run if setup were manual.

Testing Against a Real Engine

The test harness in manifold-api is a TypeScript npm package that spins up a standard pico-engine in Docker, mounts the repo's KRL files directly, runs bootstrap and lifecycle scenarios, then tears the container down. Because the engine loads the mounted KRL through file:// URLs, you can edit a ruleset and re-run without rebuilding the image, so the iteration loop is fast. The npm test command runs the full suite: a KRL syntax parse gate, Docker startup, bootstrap (tag registry, owner, Manifold pico), and the create/add/remove/delete flows for things and communities. The current scenarios give you a regression baseline before touching any of the core rulesets.
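The shape of that run (ordered stages, stop at the first failure, always tear down) can be sketched as follows. The `Stage` interface, the `runSuite` function, and the stage names are hypothetical; the actual harness API is not shown in this post, and a real harness would do Docker and HTTP work inside each stage.

```typescript
// Hypothetical stage shape; stages are synchronous here to keep the sketch small.
interface Stage {
  name: string;
  run: () => void;
}

interface SuiteResult {
  passed: string[];
  failed: string | null;
}

// Run stages in order, stop at the first failure, and always tear down.
function runSuite(stages: Stage[], teardown: () => void): SuiteResult {
  const passed: string[] = [];
  try {
    for (const stage of stages) {
      try {
        stage.run();
      } catch {
        return { passed, failed: stage.name };
      }
      passed.push(stage.name);
    }
    return { passed, failed: null };
  } finally {
    teardown(); // runs even when a stage fails, so no container is left behind
  }
}

// Stage names mirror the suite described above; the bodies are placeholders.
const suiteStages: Stage[] = [
  { name: "krl-parse", run: () => {} },
  { name: "docker-start", run: () => {} },
  { name: "bootstrap", run: () => {} },
  { name: "lifecycle", run: () => {} },
];
```

Putting teardown in a `finally` block is the design choice that matters here: a failed bootstrap should not leave a stale engine running for the next run to trip over.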

Sensor Network Moves Inside Manifold

Once the platform was solid, I looked at my old temperature-network repo (the one behind the Dragino LoRaWAN sensor network I put in place at a remote pump house) and saw an obvious refactoring opportunity. The original repo managed its own pico hierarchy independently of Manifold. The new one does not: the sensor-network repo replaces temperature-network entirely, rewriting all its rulesets to treat sensor communities and devices as ordinary Manifold community and thing picos.

The design is a clean layering. Manifold handles the pico hierarchy, subscription management, thing and community lifecycle, and notifications. The sensor network adds sensor-specific behavior on top. Installing io.picolabs.sensor.network_bootstrap on the Manifold pico is the only requirement to get started. From there, raising a sensor:create_community event delegates to Manifold's generic community machinery to create a sensor network community pico.

Creating a sensor follows the same delegation pattern: raising the sensor:initiation event on a community's sensor channel delegates to Manifold's thing creation with a callback. The community then receives community:thing_created and finishes the sensor-specific setup: installing the appropriate router ruleset for the sensor type, setting up threshold monitoring, and enabling the requested notification channels. Threshold alerts are raised as manifold:add_notification events rather than calling Twilio or Prowl directly, so the sensor-network rulesets never need to know how the owner gets notified.
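The delegate-then-callback flow is easier to see as a small in-memory model. Everything in this sketch is an assumption for illustration: the class names, the attribute names, and the way pending requests are tracked. Only the event names sensor:initiation and community:thing_created come from the post, and the real rulesets are KRL, not TypeScript.

```typescript
// The two events in the flow, modeled as a discriminated union.
type SensorInitiation = { domain: "sensor"; type: "initiation"; name: string; sensorType: string };
type ThingCreated = { domain: "community"; type: "thing_created"; name: string; thingId: string };
type PicoEvent = SensorInitiation | ThingCreated;

// Stand-in for Manifold's generic thing creation: it knows nothing about sensors.
class ManifoldModel {
  private nextId = 1;

  createThing(name: string, callback: (e: ThingCreated) => void): void {
    const thingId = `thing-${this.nextId}`;
    this.nextId += 1;
    callback({ domain: "community", type: "thing_created", name, thingId });
  }
}

// Stand-in for the sensor community: delegates creation, then finishes setup.
class SensorCommunityModel {
  readonly sensors = new Map<string, { name: string; sensorType: string }>();
  private pending = new Map<string, string>(); // name -> requested sensor type
  private manifold: ManifoldModel;

  constructor(manifold: ManifoldModel) {
    this.manifold = manifold;
  }

  receive(e: PicoEvent): void {
    if (e.domain === "sensor") {
      // Remember what was asked for, then hand creation to the platform.
      this.pending.set(e.name, e.sensorType);
      this.manifold.createThing(e.name, (created) => this.receive(created));
    } else {
      // Callback: the thing exists, so do the sensor-specific part.
      const sensorType = this.pending.get(e.name);
      if (sensorType === undefined) return; // not a thing this community requested
      this.pending.delete(e.name);
      this.sensors.set(e.thingId, { name: e.name, sensorType });
    }
  }
}
```

In this model the platform never sees a sensor type and the community never allocates a thing ID, which mirrors the layering described above.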

Supported hardware today is Dragino LoRaWAN sensors: LHT65 (temperature/humidity), LSE01 (soil), LSN50 (multi-purpose), and WL03A-LB (water leak). Each sensor type gets a router ruleset that decodes payloads and raises sensor domain events. Adding a new sensor type requires registering it in io.picolabs.sensor.community and providing a router ruleset—the rest of the stack does not change.
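One way to picture the extension point is a registry keyed by sensor type, where each entry is a router that turns a raw payload into sensor domain events. The sketch below assumes that shape; `SensorTypeRegistry`, the `Router` signature, and the `DEMO01` type are hypothetical, and the toy router does not reflect any real Dragino payload format.

```typescript
interface SensorEvent {
  domain: "sensor";
  type: string;
  attrs: Record<string, number>;
}

// A router decodes one sensor type's payload into domain events.
type Router = (payload: number[]) => SensorEvent[];

class SensorTypeRegistry {
  private routers = new Map<string, Router>();

  // Adding a sensor type means registering it together with its router.
  register(sensorType: string, router: Router): void {
    this.routers.set(sensorType, router);
  }

  supported(): string[] {
    return Array.from(this.routers.keys()).sort();
  }

  // Everything downstream of this call is the same for every sensor type.
  route(sensorType: string, payload: number[]): SensorEvent[] {
    const router = this.routers.get(sensorType);
    if (router === undefined) {
      throw new Error(`Unsupported sensor type: ${sensorType}`);
    }
    return router(payload);
  }
}

// Placeholder router for a made-up type: it only reports the payload size.
const registry = new SensorTypeRegistry();
registry.register("DEMO01", (payload) => [
  { domain: "sensor", type: "reading", attrs: { byteCount: payload.length } },
]);
```

Because callers only ever go through `route`, supporting new hardware touches the registry and one router and nothing else.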

Shared Test Infrastructure

The sensor-network test harness reuses manifold-api's infrastructure directly via dependsOn. When npm test runs in the sensor-network repo, it mounts both repos into a single pico-engine Docker container: manifold-api provides the platform rulesets, sensor-network provides the sensor-specific ones. The test suite bootstraps a full Manifold installation, creates a sensor community, initiates sensors for LHT65, LSE01, and LSN50, and tears everything down. Because the platform and the domain layer share a test container, integration failures between them surface immediately rather than later in production. A stable Manifold API means sensor-network's tests can focus on sensor behavior instead of re-testing platform primitives.

Future Work

Three areas are on the near-term roadmap. The first is bringing over the Personal Data Store (PDS) ruleset from Fuse and updating it for the Manifold model. The original PDS was more than a profile; it was a structured data contract for every pico, organizing state into a profile slice, a namespaced elements store for app and domain data, and a per-ruleset settings store. Rulesets wrote their configuration data using PDS events rather than touching entity vars directly, which meant the PDS owned the data and could enforce schemas, react to changes, and clean up on uninstall. The shared schema part is what made this useful: when a ruleset declared its data shape through the PDS, other rulesets and the platform could discover what that pico knew how to do and what data it held without hard-coding assumptions about what was installed where.
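The three slices described above can be sketched as a small data contract. This is a hypothetical model, not the Fuse PDS or a planned Manifold API: the class and method names are invented, the setting key in the usage note is made up, and the choice to clear only the per-ruleset settings on uninstall is an assumption.

```typescript
// Get or create the inner map for a namespace or ruleset id.
function slot(store: Map<string, Map<string, unknown>>, name: string): Map<string, unknown> {
  let inner = store.get(name);
  if (inner === undefined) {
    inner = new Map<string, unknown>();
    store.set(name, inner);
  }
  return inner;
}

class PdsModel {
  // Profile slice: who or what this pico is.
  readonly profile = new Map<string, string>();
  // Elements slice: namespace -> key -> value, for app and domain data.
  private elements = new Map<string, Map<string, unknown>>();
  // Settings slice: ruleset id -> key -> value.
  private settings = new Map<string, Map<string, unknown>>();

  putElement(namespace: string, key: string, value: unknown): void {
    slot(this.elements, namespace).set(key, value);
  }

  getElement(namespace: string, key: string): unknown {
    return this.elements.get(namespace)?.get(key);
  }

  putSetting(rid: string, key: string, value: unknown): void {
    slot(this.settings, rid).set(key, value);
  }

  getSetting(rid: string, key: string): unknown {
    return this.settings.get(rid)?.get(key);
  }

  // Because the store owns the data, it can clean up when a ruleset is removed.
  uninstall(rid: string): void {
    this.settings.delete(rid);
  }
}
```

A ruleset such as io.picolabs.sensor.community would call `putSetting` with its own ruleset id instead of writing entity variables, and anything else on the pico could then read that setting through `getSetting` without knowing how it is stored.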

Right now Manifold has none of that. Profile and configuration data is scattered: wrangler stores a pico name in myself(), the Manifold pico stores names in its thing and community registries, and individual rulesets like SafeAndMine maintain their own contact info. Each domain repo works around the absence of a shared data contract by stitching together entity vars and event attributes on its own. A proper PDS ruleset installed on every pico would replace that sprawl with a single queryable API, give sensor-network a reliable way to describe its things, and, more importantly, give any future domain repo a foundation it can build on without reinventing storage conventions from scratch.

The second item is a Home Assistant integration. I have been running Home Assistant alongside my sensor network and the obvious next step is an API layer that lets Home Assistant read sensor state and trigger automations based on it. Home Assistant has a well-documented REST API model, and the Manifold thing and community queries map cleanly onto it; it is more a matter of building the bridge than solving a hard architectural problem. Longer term, I think we could recreate much of what the original Manifold web app provided—dashboards, thing management, notification configuration—directly inside Home Assistant, which already has a capable UI and a large ecosystem of integrations.
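As one possible direction for that bridge, a sensor reading could be pushed into Home Assistant through its REST API, which accepts a POST to /api/states/<entity_id> with a bearer token and a JSON body containing a state and attributes. The sketch below only builds the request so the mapping is visible. The `SensorReading` shape, the function names, and the entity naming scheme are assumptions, not a description of an existing integration.

```typescript
// Hypothetical shape for a reading pulled from a Manifold thing.
interface SensorReading {
  name: string;
  temperatureC: number;
}

interface HaRequest {
  method: "POST";
  url: string;
  headers: Record<string, string>;
  body: string;
}

// Home Assistant entity ids look like "<domain>.<object_id>"; derive one from the name.
function toEntityId(name: string): string {
  const slug = name
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "_")
    .replace(/^_+|_+$/g, "");
  return `sensor.${slug}`;
}

// Build, but do not send, the state update for one reading.
function buildStateUpdate(baseUrl: string, token: string, reading: SensorReading): HaRequest {
  return {
    method: "POST",
    url: `${baseUrl}/api/states/${toEntityId(reading.name)}`,
    headers: {
      Authorization: `Bearer ${token}`,
      "Content-Type": "application/json",
    },
    body: JSON.stringify({
      state: String(reading.temperatureC),
      attributes: { unit_of_measurement: "°C", friendly_name: reading.name },
    }),
  };
}
```

Keeping the request construction separate from the network call makes the mapping easy to test without a running Home Assistant instance.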

Further out, Manifold needs to support multi-tenancy and proper authentication. The current model assumes a single owner per engine instance, which works fine for a personal deployment but limits how broadly Manifold can be used. Authentication and richer authorization (controlling who can raise events and query state on which picos) are the deeper requirement. That is not something Manifold can solve on its own; it requires support from the pico engine. The engine would need to enforce identity and access control at the channel level before Manifold could reliably build multi-tenant behavior on top of it.

The pattern here—a domain repo that treats Manifold as a dependency and shares its test infrastructure—is intentional. Any pico-based application that needs communities, notifications, and thing management should be able to build on manifold-api without forking its bootstrap logic or reimplementing its notification plumbing. The goal is to make Manifold a framework that domain repos build on, not a collection of utilities that each repo copies. These two repos are the first concrete demonstration of that working end to end.

Photo Credit: Sensor network on Manifold from the sensor-network repository documentation (public domain)